Kontxtual™ for AI Security provides contextual protection across your AI lifecycle. By connecting data, identity, usage, and risk in near real time, it enables proactive enforcement and visibility from source to model output, so enterprises can scale AI responsibly.

What sets Kontxtual™ apart:

AI-Aware Context: Understands the purpose, owner, and regulatory profile of training data.

Unified Governance: Extends DSPM, access intelligence, and policy enforcement directly into AI workflows.

Enterprise-Scale Architecture: Handles petabyte-scale, hybrid, cloud, and federated environments without performance trade-offs.

Holistic, Consistent Data Labeling: Uses semantic views to apply metadata tags to files on-premises or in the cloud without altering their Last Accessed, Last Modified, or Date Created fields.

Contextualized Scanning: Identifies production data in non-production environments, helping ensure developers work only with anonymized data.

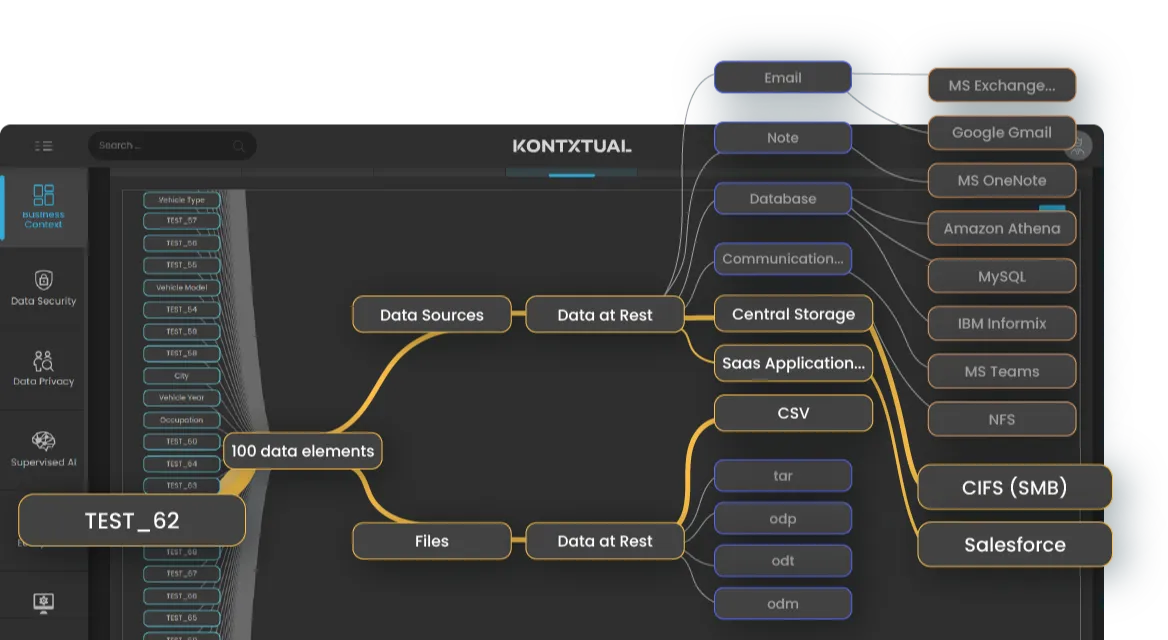

Continuous Discovery & Enforcement: Scans data at rest and in motion, applying real-time, identity-aware policies at the data layer.

Seamless Integration: Works natively with Snowflake, Databricks, BigQuery, Guardium, and other data tools to fit within your existing stack.